Technologies I Don't Want to Work With Again

Some tools, frameworks, and languages just aren't that great. Some are badly designed and were never a good fit.

What makes for likeable tools? #

There are a lot of technologies, tools, frameworks, and programming languages I do like, with only a few exceptions. It’s inevitable that you will eventually come across a tool that is frustrating to use, not suited for its intended purpose, or causes too much friction. This post is about those tools.

Before I discuss what I don’t like, it’s worth visiting what makes something good. I’d say that for a tool to qualify as good, it has to meet most of these requirements:

- Good documentation

- Good developer experience

- Provides value without also being difficult or archaic to use

- It aids in the creation of testable and maintainable software and does not make either of these goals impractical

- Reliable, consistent, and repeatable in the sense that it can be depended upon and given the same input the same output is returned

- It does not need an overly involved and complex setup or runtime to work

- Can be installed and/or run on a number of platforms without getting bogged down in complex OS specific minutiae

- It does not take hours or days to get to the point of “hello world”

There’s probably a lot more I could list if I thought about it. I think this is a pretty good set of characteristics though.

What about bad tools? #

I wonder how many of these you, the reader, have experienced. I’d love to hear about it because I’ve probably had experiences I’ve forgotten about! For me, bad tools are pretty much the opposite of all the previous points. If a tool has a couple of good points but mostly bad points, it’s likely still a bad tool.

- Poor, confusing, outdated documentation - Why don’t Linux man pages have examples?

- Bad developer experience - Take, for example, the shitshow that is the Node/NPM ecosystem - npm audit: Broken by Design

- Does not provide value or provides value but is extremely hard to start using

- Cannot be depended or relied upon

- Cannot be installed easily or without excessive configuration and debugging - XKCD’s comic “Python Environment” is exactly what I mean

- Its mere existence attracts technical debt due to the way it’s expected to be used

The List #

🟡 Would use again but found the experience frustrating or not ideal.

🟠 Will never use again except under extreme or specific circumstances (such as maintaining a legacy project).

🔴 Will never use again under any circumstances.

The list is currently not in any particular order.

Debian 🟡 #

I used to be a user of the Debian distribution for servers and virtual machines, but its leaders’ dogmatic and impractical world view became particularly frustrating. My tipping point came when installing Debian on a fairly old Dell laptop. The laptop had Intel Wi-Fi onboard. The particular chipset at the time was extremely common and had/has widespread support across both Windows and Linux.

Debian had installed perfectly fine on the laptop, but the then latest major release of Debian decided it would no longer ship with what it likes to call non-free firmware and forced users to install these drivers after the installation instead. See the problem yet? Users literally had no way of installing the drivers needed to access the internet, because they had no way of accessing any network unless they had an ethernet connection available. This is when I realised I didn’t wish to continue supporting such user-hostile Stallman-like nonsense. I use Ubuntu in place of Debian for servers and virtual machines now - it ships with firmware/drivers.

Docker (locally) 🟡 #

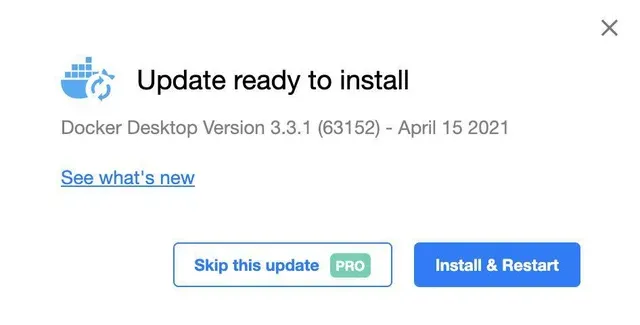

Docker is OK for local development. I can’t deny that being able to spin up some quick containers is very useful. However, I found Docker (the company) to be engaging in some morally questionable tactics. There’s a Reddit thread that covers the tipping point for me - requiring users to pay to delay updates. Docker has since removed this malware-like feature of their software - but the fact remains they had this functionality enabled for a considerable amount of time. I try to stick to running Docker for deployment now.

Web Components 🟡/🟠 #

Web Components (an umbrella term for custom elements, shadow DOM, and HTML templates) are a pretty controversial topic. On one side of the debate they are considered to be an amazing “framework agnostic” approach to making components, and on the other side of the debate they are a painful technology to apply correctly, with a series of weird APIs and edge cases.

I would recommend reading the article Maybe Web Components are not the Future? for some background explanation of this debate.

There’s a lot of information out there and I don’t feel like repeating it all here. I’ve used them on and off for a while and some arguments get repeated ad nauseam by people that I believe don’t fully understand the sentences they are repeating. One such common misconception is “it’s future proof as you can use any framework and swap frameworks!”. Here’s why this is a bad faith argument:

- JavaScript UI frameworks solve essentially two problems - binding state to the UI (the DOM, in this case), and reacting to user interactions with the UI. Web Components solve neither of these problems. They are a way to create custom HTML elements that can be used in the DOM with optional encapsulation. They are not a framework.

- Regardless of whether you write components as Web Components or in a framework, you still need something (usually a framework) to bind state to the UI and react to user interactions. You can’t just use Web Components and expect reactive UIs to be created automagically.

- If you wish to write vanilla code (that is, framework-less code) you will need to create everything in the first bullet point yourself. Whether that is a good idea or not (usually it’s not!) you still need a mechanism to bind state to the UI and react to user interactions with the UI.

- So in essence whether you use web components or not you still need the same base functionality. Most of the time you will choose to use a framework. For web components, one very popular framework is Stencil. It provides everything you’d expect of a traditional framework: TypeScript, JSX/TSX, rendering, functional components, state management, and event handling.

- So by saying “it’s future proof and you can swap frameworks” you need to ask which framework are you referring to? The framework the web components are written in or the framework the application using the web components is written in?

- If you swap the framework used in the web components, you are practically going to be rewriting the components anyway. What was future proof about that? This seems like normal refactoring and upgrades in an application lifecycle so far to me.

- If you swap the framework used in the application then sure your web components could remain written in the same framework but… what is different to writing a normal component in X framework and hosting it in framework Y? That’s pretty straightforward.

- You’re probably next going to say “Haha! But if you host components written in framework X inside application using framework Y, then you’ll have a terrible time integrating and synchronising state and the API might be awkward”.

- Well - how is that literally any different from what happens when we host a web component inside an application anyway?

- In either approach, wrangling of state, events, and other synchronisation concerns still happens. I would argue it happens more with web components because of the fact that attributes are not the same as properties.
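To make the “you still need the same base functionality” point concrete, here’s a minimal sketch of the mechanism you end up writing (or pulling in from a framework) whether or not web components are involved. It is deliberately DOM-free so it runs anywhere, and the names `createStore`, `renderCounter`, and `onClick` are hypothetical, not taken from any library.

```typescript
// Hypothetical, illustrative names throughout; this is a sketch, not a library API.
type Listener<S> = (state: S) => void;

interface Store<S> {
  get(): S;
  set(update: (prev: S) => S): void;
  subscribe(listener: Listener<S>): () => void;
}

function createStore<S>(initial: S): Store<S> {
  let state = initial;
  const listeners = new Set<Listener<S>>();
  return {
    get: () => state,
    set(update) {
      state = update(state);
      // Notify every subscriber so the "UI" can re-render.
      listeners.forEach((listener) => listener(state));
    },
    subscribe(listener) {
      listeners.add(listener);
      // Return an unsubscribe function.
      return () => {
        listeners.delete(listener);
      };
    },
  };
}

interface CounterState {
  count: number;
}

// Problem 1: binding state to the UI. The "UI" here is just an HTML string;
// in a browser you would assign it to innerHTML or patch the DOM instead.
function renderCounter(state: CounterState): string {
  return `<button>Clicked ${state.count} times</button>`;
}

const store = createStore<CounterState>({ count: 0 });
let html = renderCounter(store.get());
store.subscribe((state) => {
  html = renderCounter(state);
});

// Problem 2: reacting to user interaction. In a browser this handler would be
// registered with addEventListener("click", onClick).
function onClick(): void {
  store.set((prev) => ({ count: prev.count + 1 }));
}

onClick();
onClick();
// html now reflects two clicks.
```

None of this is provided by a custom element on its own. You either write it by hand like this or you bring a framework along for the ride.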

> I hoped Web Components would take off. (I even wrote a course on them).
>
> They didn’t.
>
> Why?
>
> Because frameworks frequently innovate and improve. Standards can’t do that.
>
> So, the Web Components API and ecosystem is inferior to today’s modern JS frameworks.
>
> Cory House (@housecor), September 14, 2022

ASP.NET MVC 🟠 #

I want to make it clear that I regularly use .NET and it’s my favourite ecosystem. I’m just specifically ranting about one aspect of its web support.

This one takes a bit of explaining because of Microsoft’s well known inability to name things well. When I say “ASP.NET MVC” I’m referring to two parts - ASP.NET before ASP.NET Core existed and also MVC itself. I spent several years using (and regretting using) ASP.NET MVC. While creating maintainable software with ASP.NET MVC is not impossible, its proponents and community sure do a good job of pretending it is. The situation improved marginally alongside the release of .NET Core. For example, view components and tag helpers were created.

Laughably, for seemingly the first time since the original release of ASP.NET MVC over a decade ago, the Visual Studio tooling for its view files finally had some improvements. From what I can tell, the original tooling was indeed written that long ago and then abandoned despite being shipped in multiple versions of Visual Studio ever since. The new tooling was introduced in Visual Studio 2022. I’m not sure what’s worse - the fact that for more than a decade the previous bad tooling was the only choice for creating views in ASP.NET MVC or that the developers using it didn’t even realise how bad things really were. Seriously, formatting the file was likely to result in some crazy indentation and false positive error messages.

I’m not sure why it lends itself to generating spaghetti code so much, but ASP.NET MVC codebases always seem to suffer from bad documentation, little to no structure, and poor unit tests, and they are often created by “we’ve always done it that way” teams.

What I do like to use, however, is .NET and ASP.NET Core. If the naming here is confusing in any way, try having a conversation with other .NET developers and you’ll see that even the official documentation doesn’t explain it well at all.

Jira 🔴 #

The tool of choice for micromanagers and Agile ™ enthusiasts. As you may know from my previous post, most organisations are “doing Agile” instead of being agile. Atlassian seems to have captured the market for this.

jQuery 🔴 #

There is no reason to still be using jQuery for new projects. There are far better and more cohesive libraries and tooling available - including none at all - “vanilla”. Whether you still want DOM-coupled state or a conventional component based framework, there’s so much choice. Often people were/are building jQuery based applications, or lots of interactive content containing state, which I think is the real problem. In my experience every project that heavily used jQuery was a complete mess.

Here are some better alternatives:

- Purely “vanilla”

- RE:DOM

- Mithril

- HTM

- VDOM (Virtual DOM) is a concept core to many frameworks and libraries - two popular options are snabbdom and virtual-dom (if you’ve seen the `h()` convention for render functions, this is where it came from)

- Alpine - DOM focused but at least allows state to be handled in a cleaner way

- HTMX - A unique take that really fits into its own category

- One of the component based frameworks like Vue, React, Preact, and Solid
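If the `h()` convention mentioned in the list is unfamiliar, here’s a rough sketch of the idea: a function that builds plain objects describing elements, plus something that turns those objects into output. This is a simplified illustration with a made-up `VNode` shape and a hypothetical `renderToString` helper. It is not the exact signature used by snabbdom or virtual-dom, and it ignores details such as void elements and event handlers.

```typescript
// A vnode is a plain object describing an element; nothing here touches the DOM.
interface VNode {
  tag: string;
  props: Record<string, string>;
  children: Array<VNode | string>;
}

// Simplified h(): tag name, attributes, then any number of children.
function h(
  tag: string,
  props: Record<string, string> = {},
  ...children: Array<VNode | string>
): VNode {
  return { tag, props, children };
}

// Escape text so it is safe to place inside HTML content or attribute values.
function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Hypothetical renderer: walks the vnode tree and produces an HTML string.
// A real virtual DOM library would diff two trees and patch the DOM instead.
function renderToString(node: VNode | string): string {
  if (typeof node === "string") {
    return escapeHtml(node);
  }
  const attrs = Object.keys(node.props)
    .map((name) => ` ${name}="${escapeHtml(node.props[name])}"`)
    .join("");
  const inner = node.children.map((child) => renderToString(child)).join("");
  return `<${node.tag}${attrs}>${inner}</${node.tag}>`;
}

const view = h(
  "ul",
  { class: "todo" },
  h("li", {}, "Write post"),
  h("li", {}, "Ship <it>")
);
const markup = renderToString(view);
```

Because the view is just data, it can be built from state with ordinary functions, which is the part jQuery-style code tends to scatter across event handlers.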

Nuxt 🔴 #

Vue is an… okay framework (well, not really). The Nuxt framework that builds on top of it - not so much. I originally made an early version of this site with it but soon realised that it was going to be a huge pain and source of technical debt going forward. The configuration was arbitrary: one Nuxt module expecting its configuration to be in a different location to the configuration for other Nuxt modules was one of my most frustrating experiences. The beta release for Nuxt 3 was more like an alpha quality release - it was not tested on Windows and couldn’t run on it for several weeks - despite running on Node. Strange.

Following on from that, its official Discord community is less than helpful, with the typical “moderator of online community power tripping” nonsense leading to a fairly negative experience trying to engage with the community - this is mainly down to a couple of particular individuals within the Nuxt team. It goes to the extent that common internet abbreviations even trigger a ridiculous automatic “warning” - I wish I was exaggerating.

I’ve been on the internet a while and witnessed total stupidity across IRC, IM, social media, forums, but this one really sticks out. For this reason alone I chose not to support such behaviour and rewrote my site with another framework. There’s also the support aspect - most questions asked by anyone are ignored by the Nuxt team. Making abbreviation triggered bots is important though…

Angular 🔴 #

I simply cannot bear to work in an Angular project. To be clear, I’ve used multiple frontend frameworks and Angular remains the most painful and least productive option. I personally believe many organisations choosing Angular “because it’s a whole framework” are very misguided. I cannot think of a worse choice, other than jQuery, for a new project with developers new to web development.

- It’s surprisingly common to see Angular builds failing because they consume too much memory. Yes, that’s right - it’s the only frontend framework I know of that requires its users to change Node’s default memory constraints with the `--max-old-space-size` flag. This appears to be a known problem, but there seems to be no desire to resolve it. This should be raising alarm bells for anyone considering Angular.

- It’s just so slow to build. On average I can start and stop the CLI/dev server for other frameworks multiple times in the time it takes the Angular dev server to even start serving content to the browser.

- The styling situation. The Angular team seems to assume (if not insist) that you will use CSS or SCSS. But what about CSS in JS/TS, a common development practice in React? Nope! Now your ability to design composable, maintainable, and type-safe design systems with Angular is extremely limited.

- Angular’s default unit testing creates a browser based testing setup with Chromium. Components shouldn’t have to be tested in a real running browser - that’s what integration tests are for. Other frameworks use Jest, Vitest, etc.

- The pool of good software developers with Angular experience is very small. This is not only a cause for concern when it comes to hiring, but also for the quality of the code in the project. This is a recipe for disaster. One amusing scenario I encountered recently was a group of alleged “Angular experts” that had not used NPM before.
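On the styling point above, here’s roughly what I mean by “type-safe” when styles live in TypeScript. This is a framework-independent sketch with hypothetical token names and a made-up `buttonStyle` helper. It is not how any particular CSS-in-JS library works, only an illustration of the compiler catching invalid design tokens.

```typescript
// Hypothetical design tokens; `as const` preserves the literal keys and values.
const tokens = {
  color: { primary: "#0055ff", danger: "#cc0000" },
  space: { sm: "4px", md: "8px", lg: "16px" },
} as const;

type Color = keyof typeof tokens.color; // "primary" | "danger"
type Space = keyof typeof tokens.space; // "sm" | "md" | "lg"

interface ButtonStyleProps {
  color: Color;
  padding: Space;
}

// Builds a CSS declaration string using only known tokens.
function buttonStyle({ color, padding }: ButtonStyleProps): string {
  return `background:${tokens.color[color]};padding:${tokens.space[padding]};`;
}

const primaryButton = buttonStyle({ color: "primary", padding: "md" });

// buttonStyle({ color: "purple", padding: "md" }) would not compile:
// "purple" is not assignable to type Color.
```

With plain CSS or SCSS files, a typo in a variable or class name tends to surface at runtime (or not at all) rather than at compile time.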